At Kikoff, experimentation is at the center of how we build and ship. Every ship decision goes through an experiment, and every experiment goes through peer review: before any ship decision is made, a data scientist who was not involved in the project examines the readout as an independent reviewer, much like code review in engineering.

The process works and catches real issues, but it doesn't scale well as our experiment velocity grows.

A thorough review requires cross-referencing Statsig (Kikoff’s third-party partner for experimentation), validating exposure counts in Snowflake, checking dozens of guardrail metrics, recomputing revenue projections, and verifying that the codebase implementation matches the targeting rules. A single review can take 30 minutes to an hour.

We built the AI Experiment Reviewer to automate the mechanical parts of this process — an agentic system powered by Claude that runs a structured, multi-agent peer review and posts its findings directly to the experiment's Notion doc.

In this post, we break down the system's architecture, walk through each agent's role, and share what we learned building it.

Architecture: three agents, one orchestrator

The AI Experiment Reviewer essentially splits the data scientist's role into three: a Statistician, a Data Engineer, and a Business Analyst.

The system is implemented as a Claude skill — a structured prompt and workflow definition that lives in our data-monorepo under .claude/skills/ai_experiment_review/. The orchestrator coordinates a pipeline of context gathering, pre-filtering, and parallel agent execution.

It connects to external systems through MCP (Model Context Protocol) servers, plus a Metabase skill and Git submodules:

- Notion MCP: fetches experiment writeups and posts structured review comments

- Statsig MCP: pulls experiment config, group allocation, and metric results

- Snowflake MCP: queries exposure counts, daily intake, and metric trends

- Metabase: creates exposure reconciliation charts via the /metabase skill

- Git submodules: provide access to the backend, frontend, and mobile codebases

Claude's Task tool spawns sub-agents that run in parallel. The architecture breaks into three layers:

- Pre-filter stage — assembles the full review context

- Parallel execution — three specialized agents each tackle a different review dimension

- Output — synthesizes findings and posts structured Notion comments with sequencing logic
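The fan-out at the heart of this design can be sketched in plain Python with `asyncio`. This is a minimal illustration under stated assumptions, not our skill definition: in the real system Claude's Task tool spawns the sub-agents, whereas here `statistician`, `data_engineer`, and `business_analyst` are hypothetical stub coroutines, and the bundle keys (`days_running`, `expected_eligible`, and so on) are made up for the example. Each agent receives the same read-only context bundle and returns a list of findings, which the output stage merges.

```python
import asyncio
from collections.abc import Mapping
from dataclasses import dataclass
from types import MappingProxyType


@dataclass(frozen=True)
class Finding:
    agent: str
    check: str
    passed: bool
    detail: str


# Hypothetical stand-ins for the three sub-agents. In the real system each
# is a Claude sub-agent; here each one just inspects the shared bundle.
async def statistician(ctx: Mapping) -> list[Finding]:
    days = ctx["days_running"]
    return [Finding("statistician", "duration", days >= 14, f"{days} days run")]


async def data_engineer(ctx: Mapping) -> list[Finding]:
    ratio = ctx["actual_exposed"] / ctx["expected_eligible"]
    return [Finding("data_engineer", "exposure", ratio > 0.95, f"ratio={ratio:.2f}")]


async def business_analyst(ctx: Mapping) -> list[Finding]:
    missing = sorted(set(ctx["top_line_metrics"]) - set(ctx["doc_metrics"]))
    return [Finding("business_analyst", "completeness", not missing, f"missing={missing}")]


async def run_review(bundle: dict) -> list[Finding]:
    # Read-only view: every agent sees the same context and none can mutate it.
    ctx = MappingProxyType(bundle)
    per_agent = await asyncio.gather(
        statistician(ctx), data_engineer(ctx), business_analyst(ctx)
    )
    findings = [f for agent_findings in per_agent for f in agent_findings]
    # Stable sort with False < True: failures first, agent order otherwise kept.
    return sorted(findings, key=lambda f: f.passed)


if __name__ == "__main__":
    bundle = {
        "days_running": 10,
        "expected_eligible": 10_000,
        "actual_exposed": 9_700,
        "top_line_metrics": ["revenue", "dau"],
        "doc_metrics": ["revenue"],
    }
    for f in asyncio.run(run_review(bundle)):
        print("PASS" if f.passed else "FAIL", f.agent, f.check, f.detail)
```

Sorting failures to the top mirrors the idea that a review comment should lead with what needs attention; the agents themselves stay independent because none of them can write to the shared bundle.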


Pre-filter: assembling the context bundle

Before any review agent runs, the orchestrator gathers everything needed for a complete review. This stage has two parts: data collection and classification.

Data collection pulls from five sources in parallel: Notion, Statsig, Snowflake, Metabase, and GitHub.

Classification is handled by an Engineer agent — a sub-agent dispatched to grep through the codebase and determine who is actually exposed to the experiment. It inspects the experiment platform's targeting rules and cross-references them against code paths to produce three outputs:

- Platform — web, mobile, or both (determined by targeting rules, not just where code exists)

- Experiment type — onboarding vs. non-onboarding (determined by where the flag is checked in the code, not by the flag name)

- Version requirement — extracted from platform targeting rules, not inferred from git history

This classification is critical. It determines which SQL pattern the orchestrator uses for exposure reconciliation, and it shapes how downstream agents interpret cohorted retention metrics (D1/D7/M1/M2/M3).
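To make that dependency concrete, here is a hypothetical sketch of how a classification could select the exposure-reconciliation query. The `Classification` fields mirror the three outputs above, but the table and column names (`analytics.signups`, `analytics.daily_active_users`, `app_build`), the use of an integer build number for the version requirement, and the rule that onboarding experiments count new sign-ups while other experiments count active users are all illustrative assumptions, not our actual Snowflake schema. Dates are left as bind parameters rather than interpolated.

```python
from dataclasses import dataclass
from typing import Literal, Optional

Platform = Literal["web", "mobile", "both"]


@dataclass(frozen=True)
class Classification:
    platform: Platform              # from targeting rules, not from where code exists
    onboarding: bool                # from where the flag is checked in code
    min_app_build: Optional[int]    # from targeting rules; applies to mobile only


def expected_eligible_sql(c: Classification) -> str:
    """Return a hypothetical daily expected-eligible query; dates are bind parameters."""
    if c.onboarding:
        # Assumption: onboarding experiments are eligible for everyone who signs up.
        table, day_col = "analytics.signups", "signup_date"
    else:
        # Assumption: other experiments are eligible for anyone active that day.
        table, day_col = "analytics.daily_active_users", "activity_date"

    filters = [f"{day_col} BETWEEN %(start)s AND %(end)s"]
    if c.platform != "both":
        filters.append(f"platform = '{c.platform}'")
    if c.min_app_build is not None:
        if c.platform == "mobile":
            filters.append(f"app_build >= {c.min_app_build}")
        elif c.platform == "both":
            # Web rows have no app build, so only constrain mobile rows.
            filters.append(f"(platform = 'web' OR app_build >= {c.min_app_build})")

    return (
        f"SELECT {day_col} AS day, COUNT(DISTINCT user_id) AS expected_eligible\n"
        f"FROM {table}\n"
        f"WHERE {' AND '.join(filters)}\n"
        "GROUP BY 1\nORDER BY 1"
    )
```

A misclassification here propagates silently: a mobile-only experiment reconciled against all active users would show a large, spurious exposure gap, which is why the classification is derived from targeting rules and code paths rather than flag names.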

The pre-filter step also attaches company-specific metric definitions — terms that differ from industry defaults. For example, at many companies "DAU" might mean session-based engagement, but internally it could mean something entirely different like active subscriber status. Without these definitions, agents misinterpret domain-specific terminology. One line of context eliminated an entire class of false flags.
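A minimal sketch of attaching those definitions, assuming they are simply prepended to each agent's instructions. The `METRIC_GLOSSARY` entry below is a placeholder built from the DAU example above, not one of our real definitions, and `build_agent_prompt` is a hypothetical helper.

```python
# Placeholder definition for illustration only; NOT Kikoff's real metric definition.
METRIC_GLOSSARY = {
    "DAU": "Users with an active subscription on that day, not session-based engagement.",
}


def build_agent_prompt(role_instructions: str, glossary: dict[str, str]) -> str:
    """Prepend company-specific definitions so they take precedence over industry defaults."""
    header = "Company metric definitions (override any industry-standard meaning):"
    lines = [header] + [f"- {term}: {meaning}" for term, meaning in sorted(glossary.items())]
    return "\n".join(lines) + "\n\n" + role_instructions


if __name__ == "__main__":
    print(build_agent_prompt("You are the Business Analyst reviewing this readout.", METRIC_GLOSSARY))
```

Stating explicitly that the definitions override industry-standard meanings matters more than the formatting: without that instruction, the model tends to fall back on the common interpretation of a familiar acronym.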

Agent 1: the Statistician

The Statistician validates the experiment's statistical rigor. It runs four checks and outputs a concise pass/fail checklist — passes get one sentence; failures get a specific explanation.


What it checks:

- Health checks — sample ratio mismatch, pre-experiment bias, and other Statsig-reported diagnostics

- Duration — minimum 2 weeks for standard experiments, 1 month if retention impact is claimed

- Sample size — whether the exposed population is sufficient for adequate statistical power

- Confidence intervals — every metric claim in the doc is verified against the actual CI from Statsig. If the interval crosses zero, the claim is flagged as not statistically significant

This agent catches the kind of issues that are easy to miss in manual review — a doc claiming a metric improved when the CI includes zero, or an experiment called after only ten days when retention was a primary outcome.
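The deterministic parts of these checks are simple enough to sketch. This is an illustration, not the agent's actual logic: the 30-day reading of "1 month", the SRM p-value threshold, and the helper names are assumptions. Statsig already reports sample ratio mismatch itself; the `srm_p_value` function just shows what that diagnostic computes (a chi-square goodness-of-fit test against the configured split).

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    name: str
    passed: bool
    note: str


def duration_check(days_running: int, retention_claimed: bool) -> CheckResult:
    # 2 weeks minimum; "1 month" is approximated here as 30 days (assumption).
    required = 30 if retention_claimed else 14
    return CheckResult(
        "duration", days_running >= required, f"{days_running} days run, {required} required"
    )


def ci_check(metric: str, ci_low: float, ci_high: float) -> CheckResult:
    # Significant only if the whole interval sits on one side of zero.
    crosses_zero = ci_low <= 0 <= ci_high
    note = f"CI [{ci_low:+.4g}, {ci_high:+.4g}]" + (" includes zero" if crosses_zero else "")
    return CheckResult(f"ci:{metric}", not crosses_zero, note)


def srm_p_value(control_n: int, treatment_n: int, treatment_share: float = 0.5) -> float:
    """Chi-square goodness-of-fit p-value (1 degree of freedom) for sample ratio mismatch."""
    total = control_n + treatment_n
    expected = (total * (1 - treatment_share), total * treatment_share)
    observed = (control_n, treatment_n)
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    # With 1 degree of freedom, P(X >= chi2) = erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


def format_checklist(results: list[CheckResult]) -> str:
    # Passes stay terse; failures carry the specific explanation.
    return "\n".join(
        f"PASS {r.name}" if r.passed else f"FAIL {r.name}: {r.note}" for r in results
    )


if __name__ == "__main__":
    checks = [
        duration_check(10, retention_claimed=True),
        ci_check("revenue_per_user", -0.4, 2.1),
    ]
    if srm_p_value(50_000, 51_200) < 0.001:  # threshold is an assumption
        checks.append(CheckResult("srm", False, "allocation deviates from 50/50"))
    print(format_checklist(checks))
```

The example run reproduces both failure modes described above: a retention claim after only ten days, and a metric claim whose interval includes zero.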

Agent 2: the Data Engineer

The Data Engineer verifies the data pipeline feeding the experiment. It outputs a pass/fail checklist focused on two areas.


What it checks:

- Metric source health: whether any primary or secondary metrics show NULL or missing data. The agent is careful about what not to flag: longer-horizon cohorted metrics (M2, M3) naturally show zero if the experiment hasn't run long enough for those cohorts to mature

- Exposure reconciliation: reviews the Metabase charts created during pre-filtering

  - Daily line chart: expected eligible users vs. actual exposed-and-active users per day. At full allocation, the two lines should overlap (>95% ratio)

  - Cumulative chart: running distinct user totals, cross-checked against the total Statsig exposure count

  - Flags shape disagreements, ramp-up mismatches, persistent gaps at full allocation (which can indicate SDK initialization timing, version-detection mismatches, or data pipeline backfill delays), and any case where actual exposed exceeds expected eligible (impossible without a bug)
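The numeric core of these two checks can be sketched as follows. It assumes the daily rows are already restricted to full-allocation days; the 2% cumulative tolerance, the cohort horizons (D1=1, D7=7, M1=30, M2=60, M3=90 days), and all function names are assumptions for illustration, not the agent's actual thresholds.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DailyExposure:
    day: str
    expected_eligible: int
    actual_exposed: int  # exposed-and-active users that day


def reconcile_daily(rows: list[DailyExposure], min_ratio: float = 0.95) -> list[str]:
    """Flag full-allocation days where actual exposure diverges from eligibility."""
    flags = []
    for r in rows:
        if r.expected_eligible == 0:
            if r.actual_exposed > 0:
                flags.append(f"{r.day}: exposures with zero eligible users (likely bug)")
            continue
        ratio = r.actual_exposed / r.expected_eligible
        if ratio > 1:
            flags.append(
                f"{r.day}: actual {r.actual_exposed} > expected {r.expected_eligible} (likely bug)"
            )
        elif ratio <= min_ratio:
            flags.append(f"{r.day}: ratio {ratio:.1%} not above {min_ratio:.0%}")
    return flags


def cumulative_matches(distinct_exposed: int, statsig_total: int, tolerance: float = 0.02) -> bool:
    """Compare the chart's running distinct total with Statsig's exposure count."""
    return abs(distinct_exposed - statsig_total) <= tolerance * statsig_total


# Assumed cohort horizons in days; metrics without a cohort have horizon 0.
COHORT_HORIZON_DAYS = {"D1": 1, "D7": 7, "M1": 30, "M2": 60, "M3": 90}


def missing_metrics(
    values: dict[str, Optional[float]], cohorts: dict[str, str], days_running: int
) -> list[str]:
    """Metrics with NULL data, skipping cohorted metrics that cannot have matured yet."""
    flagged = []
    for name, value in values.items():
        horizon = COHORT_HORIZON_DAYS.get(cohorts.get(name, ""), 0)
        if days_running < horizon:
            continue  # e.g. M2 after three weeks: an empty value is expected
        if value is None:
            flagged.append(name)
    return flagged
```

For example, three weeks into an experiment a NULL M2 retention metric is skipped while a NULL revenue metric is flagged, and a day with 1,010 exposed users against 1,000 eligible is reported as a likely bug rather than a small gap.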

Agent 3: the Business Analyst

The Business Analyst stress-tests the revenue and business logic claims. It performs six checks and outputs a bullet list of verdicts with specific evidence.


What it checks:

- Metric claim verification — for each claim in the doc, finds the corresponding Statsig result and flags deltas >2pp or >5% relative difference

- Metric completeness — verifies the doc addresses all top-line company metrics

- Cannibalization and guardrails — checks if gains in one metric come at the expense of another. Flags any statistically significant negative guardrail

- Directional consistency — validates that related metrics move together (e.g., transaction count and revenue should be consistent)

- Revenue methodology — independently recomputes revenue impact from the doc's own inputs, including "2 cells" scaling for 50/50 splits (the treatment arm increment must be doubled to project full-rollout impact). Flags discrepancies and unvalidated assumptions

- Tradeoff consideration — checks whether both short-term and long-term impact were assessed

The agent is loaded with company-specific domain context — metric definitions, which directional movements are positive vs. negative, and how to interpret cohorted metrics for different experiment types.
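Three of these checks reduce to small formulas, sketched below under assumptions. `claim_mismatch` is one reading of the ">2pp or >5% relative" rule (either condition triggers a flag); `full_rollout_increment` generalizes the "2 cells" adjustment by dividing by the treatment share, which doubles the increment for a 50/50 split; and `harmful_guardrails` uses a per-metric `higher_is_better` direction taken from the domain context. All names and the example inputs are hypothetical.

```python
from dataclasses import dataclass


def claim_mismatch(claimed_pct: float, actual_pct: float) -> bool:
    """True if the doc's number differs from Statsig's by >2pp or by >5% relative."""
    gap = abs(claimed_pct - actual_pct)
    if gap == 0:
        return False
    if gap > 2.0:  # absolute gap in percentage points
        return True
    return actual_pct == 0 or gap / abs(actual_pct) > 0.05


def full_rollout_increment(treatment_increment: float, treatment_share: float) -> float:
    """Scale the treatment arm's measured increment to a 100% rollout.

    With a 50/50 split (treatment_share=0.5) this doubles the increment,
    which is the "2 cells" adjustment.
    """
    if not 0 < treatment_share <= 1:
        raise ValueError("treatment_share must be in (0, 1]")
    return treatment_increment / treatment_share


@dataclass(frozen=True)
class MetricResult:
    name: str
    ci_low: float
    ci_high: float
    higher_is_better: bool  # comes from the company-specific domain context


def harmful_guardrails(results: list[MetricResult]) -> list[str]:
    """Names of metrics whose entire CI lies on the harmful side of zero."""
    harmful = []
    for r in results:
        significantly_down = r.ci_high < 0
        significantly_up = r.ci_low > 0
        if (r.higher_is_better and significantly_down) or (
            not r.higher_is_better and significantly_up
        ):
            harmful.append(r.name)
    return harmful


if __name__ == "__main__":
    # Hypothetical doc inputs: 20,000 treatment users, $1.50 incremental revenue each.
    recomputed = full_rollout_increment(20_000 * 1.50, treatment_share=0.5)
    print(f"Full-rollout increment: ${recomputed:,.0f}")  # doubled from $30,000
```

Encoding direction per metric is what lets the same interval logic catch a significant increase in a "lower is better" guardrail, such as a hypothetical support-ticket rate, which a naive "negative delta is bad" rule would miss.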

What we learned (the hard way 😛)

This system did not come together on the first try. Here's what actually happened and what we learned.

Iterate relentlessly on the prompt. Early versions of the agent prompts produced generic reviews that weren't actionable. We went through dozens of iterations, each tightening the instructions based on cases where the output was wrong or vague.

Claude doesn't know your jargon. The Business Analyst agent kept flagging perfectly healthy metrics as concerning. A single line of explicit definition in the prompt — spelling out exactly what a term means in our company’s context — eliminated an entire class of false flags.

More data ≠ better reviews. In early runs we dumped the entire Statsig metric payload into every agent, which burned context window and confused the model with irrelevant data. Adding the pre-filter step, which felt like yak-shaving at the time, made agents dramatically more focused and accurate.

Impact

The AI Experiment Reviewer now runs on every experiment readout at Kikoff. It catches issues before a human reviewer looks at the doc — reducing the mechanical burden on DS reviewers and letting them focus on judgment calls.

Here's some feedback from the team:

"Just experienced its impact flagging risks I missed then gave concrete advice on how to address them. Feels like we hired a DS whose only job is experiment review. Big unlock for experimentation accuracy + delivery."

The system is built entirely on Claude's skill and MCP infrastructure.

If your team runs experiments at scale and reviews are a bottleneck, the multi-agent pattern — domain-specialized agents running in parallel against a shared context bundle — is a powerful starting point.

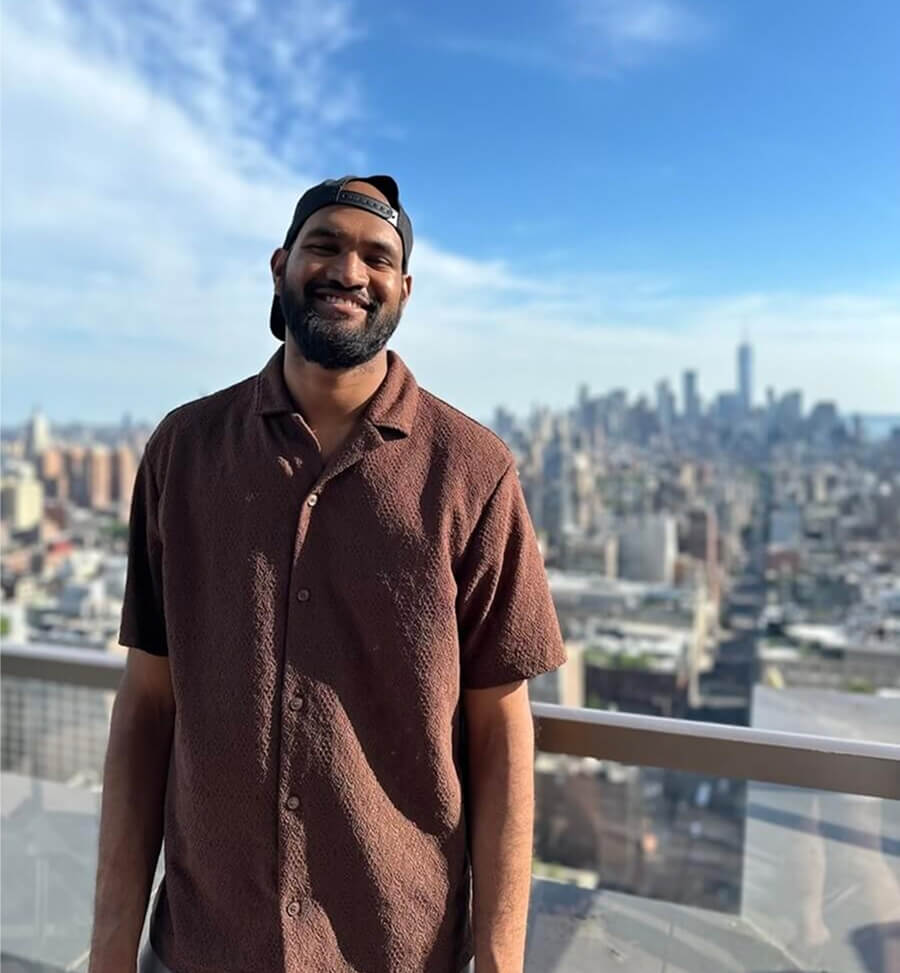

About the Authors